Practicing lawyers . . . have a few important specialized skills that make their insights particularly relevant to artificial intelligence as AI stands upon the precipice of mass-incorporation into our daily lives.[i]

Imagine that John Smith goes to a lending website to borrow $20,000 to start a new business, remodel a home office, or pay off credit-card debt at a lower interest rate. The information he provides through the online application is fairly limited: legal name, Social Security number, addresses for the last 10 years, level of education, occupation, and so on. The online system then runs that information, together with additional internal data, through an underwriting algorithm to evaluate John’s loan request and creditworthiness. What exactly is the algorithm doing to evaluate the risk of underwriting this particular loan?

In this example, algorithms are used to minimize human intervention, evaluate risk, and give the potential borrower an answer (and maybe a loan) as quickly as practicable. In addition to the limited information the borrower enters into the online application, the algorithm also draws on an abundance of database information, much of which may not relate specifically to the borrower. Based on address, for example, the algorithm may reference the credit scores of individuals living in the same ZIP code. This information, both what the borrower explicitly provides and what is aggregated from other sources based on it, is used by the algorithm to determine whether the risk associated with the loan makes business sense.
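To make the aggregation step concrete, here is a minimal, hypothetical Python sketch. The ZIP codes, scores, 50/50 weighting, and 680 cutoff are all invented for illustration and do not describe any real lender’s model. It shows how a feature computed from other people’s records can decide an applicant’s outcome even when his own credit score is unchanged.

```python
from statistics import mean

# Hypothetical records about *other* people: (ZIP code, credit score).
# Every value here is invented for illustration.
NEIGHBOR_RECORDS = [
    ("11111", 720), ("11111", 700), ("11111", 740),
    ("22222", 610), ("22222", 590), ("22222", 630),
]

# Hypothetical model settings, not taken from any real lender.
OWN_WEIGHT = 0.5
ZIP_WEIGHT = 0.5
APPROVAL_CUTOFF = 680


def zip_average_scores(records):
    """Average credit score per ZIP code, aggregated from other people's data."""
    by_zip = {}
    for zip_code, score in records:
        by_zip.setdefault(zip_code, []).append(score)
    return {zip_code: mean(scores) for zip_code, scores in by_zip.items()}


def risk_score(application, zip_averages):
    """Blend the applicant's own score with a feature describing the neighbors."""
    neighbors = zip_averages[application["zip_code"]]
    return OWN_WEIGHT * application["credit_score"] + ZIP_WEIGHT * neighbors


def approve(application, zip_averages):
    """Approve when the blended score reaches the hypothetical cutoff."""
    return risk_score(application, zip_averages) >= APPROVAL_CUTOFF


averages = zip_average_scores(NEIGHBOR_RECORDS)
john = {"name": "John Smith", "zip_code": "22222", "credit_score": 710}
same_john_elsewhere = {**john, "zip_code": "11111"}

print(approve(john, averages))                 # John in ZIP 22222
print(approve(same_john_elsewhere, averages))  # identical applicant, ZIP 11111
```

With these invented numbers, John’s blended score is 660 in ZIP 22222 (declined) but 715 in ZIP 11111 (approved). Nothing about John changed; only the data about his neighbors did.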

In theory, algorithms should prevent bias by removing the human component from the assessment of data sets. If mathematical equations, rather than fallible human beings with their own and sometimes unconscious biases, are assessing risk, the threat of bias should decrease, right? But what if your algorithm nonetheless discriminates? What if the algorithm in our example decides that women aged 21–35 are poor risks and denies most of their loan requests? Does it matter if they turn out to be mostly Hispanic? What if the algorithm decides that women are higher risk than men based on bankruptcy data and loan delinquencies? What if people from a certain ZIP code have lots of bad debt, and the algorithm refuses to lend money to people in that area 95 percent of the time? Does the analysis change if the lion’s share of people in that neighborhood are immigrants from Nigeria?[ii]

Hypothetically, none of that should matter because the algorithm is “blind” to these facts. Math doesn’t have a heart or feelings, or even know what it is to be brown or white. In practice, however, an algorithm that never sees race, sex, or national origin can still reach them through proxies such as ZIP code. If algorithmic decision-making is integral to a business process (or if a business is headed in that direction), lawyers need a deeper understanding of AI and how it does what it does.

What Is AI?

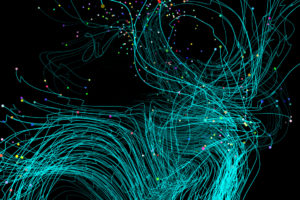

Artificial intelligence (AI) is an area of computer science focused on creating intelligent machines that attempt to mirror human cognitive processes, much faster but, at this point, only in limited ways. AI techniques make it possible to analyze vast amounts of information very quickly, unearth or develop connections and patterns, predict outcomes, and provide answers to various kinds of business problems. AI can combine several different tools (such as machine learning, natural language processing, deep learning, and chatbots), which may rely on varied and layered algorithms to perform a targeted analysis.

Usually, the information that AI analyzes is so voluminous that it would be impossible for humans to process it meaningfully. Sometimes humans teach the algorithm what to do by showing it examples of what “right” and “wrong” look like (supervised machine learning); other times it finds patterns in unlabeled data on its own (unsupervised machine learning). In one way or another, all AI tools rely on complex mathematical equations (the algorithms) to do their work. That seems neutral enough, until you remember that it is people (fallible humans) who set up the search queries, provide the inputs, and guide and manage AI. This human guidance can and does affect what AI tools do, and it is central to the question of how algorithms discriminate.

The Dirty Little Secret of AI: Algorithms Can Discriminate

It is a common misperception that AI technologies operate objectively. At the click of a mouse, thousands, millions, or even billions of data points (often called Big Data) are sliced, diced, connected, deconstructed, aggregated, and analyzed for patterns, connections, meaning, and a decision point (such as whether John Smith in our example should be approved for a credit card or loan). Although innumerable data points pass through what we acknowledge is the “AI black box” (aptly named because little is truly knowable about what the algorithm does, as discussed below), we cling to the notion that AI makes “autonomous” (and therefore legal and nondiscriminatory) decisions regardless of the outcome.

However, there is evidence that some algorithms discriminate, or at least treat certain characteristics unfairly. A company, or an algorithm a company uses, might discriminate against someone in two ways: directly (treating people differently because of a protected characteristic), or through a seemingly neutral process that has a disparate impact on legally protected classes of people. Both can be unlawful.

High-profile examples are already emerging. Bloomberg reported, for instance, that a Wall Street regulator opened a probe into Goldman Sachs’s credit-card practices after a viral tweet from a tech entrepreneur alleged that the Apple Card’s algorithms discriminated against his wife. Another high-profile Apple Card user, Apple co-founder Steve Wozniak, has also called for the government to get involved, citing excessive corporate reliance on mysterious technology. The law forbids discrimination, by algorithm or otherwise, and that prohibition can be implemented by regulating the process through which AI systems are designed.

What Has AI Discrimination Looked Like?

The math that underpins AI is incredibly complex and nearly impossible for the average person to fully comprehend. Although businesses regularly rely on AI, it is not readily understood how AI does what it does or how it ultimately comes to specific results. That lack of insight—the “black box” conception of how data was sliced or diced by the algorithm—is not helped by the lack of a paper trail detailing how AI makes decisions. We do know algorithms can and do discriminate, however, and we are beginning to understand how.

Often the inputs (such as training data and human guidance) cause the problem. Although people could purposefully set up an AI application to weed out certain people or groups, AI discrimination is more likely to be indirect or “unintentional,” and this unintentional, algorithm-driven discrimination is the focus of our guidance.

AI Is a Seismic Shift in Business Decision-Making

AI is not only here to stay; it is likely to drive a sea change in business processes and operations.[iv] AI is not just a new computational or analytical tool; it is a completely new way of doing business. Because computers are so much better and faster than humans at processing certain types of information, AI’s capabilities go far beyond what humans could process in a pre-AI world. A case in point is the Human Genome Project, which depended on computers to do the heavy analytical work; people simply didn’t have the capacity to connect billions of data points and make sense of the aggregated data. Algorithms that do the “heavy lifting” of data analysis are very powerful and can answer many business questions better than human beings. As this major shift takes place across industries, responsible business leaders should anticipate the potential adverse effects of embedding AI in a business, particularly with regard to compliance and legal risk. In other words, AI will continue to drive great and productive change in every industry, but lawyers must figure out how to better guide its use.

The Path Forward

Given AI’s power, utility, and potential for risk and liability, here are some concrete steps all businesses should consider when embedding algorithmic tools in more of their business processes:

1. Pick the right Big Data stewards, because they can affect AI outcomes. Going forward, larger companies will likely use AI in many aspects of their operations, so they should consider appointing a chief AI officer. Although it may not be reasonable to expect a single person to understand it all, there ought to be a single executive who receives periodic reporting and has overall oversight of AI’s deployment within the company.

AI is good for business, but it requires strong AI leadership, and even that is not enough. To get ahead of potential discrimination claims, companies should bring together a multidisciplinary team to develop and implement AI processes, plans, and projects.

2. Build a diverse team. The conventional wisdom for building good AI projects is to build diverse teams that understand the data inputs and help make discrimination less likely. “If diverse teams do the coding work, bias in data and algorithms can be mitigated. If AI is to touch all aspects of human life, its designers should ensure it represents all kinds of people.”[v]

3. Make lawyers and compliance professionals part of the AI team. Transparency in the AI process (as much as practicable), and thus defensibility against claims of discrimination, requires upfront involvement and guidance from lawyers and compliance professionals on the AI team. To defeat a discrimination claim, a company may need to show regulators and courts how its upfront decisions were made and how its processes were implemented, and building that record requires lawyers’ guidance from the start.

4. Make privacy part of the solution. When customers’ data or personally identifiable information (PII) is involved in an AI process (which will most often be the case), it is useful to engage the privacy officer (or whoever is primarily responsible for privacy) as well. Such early involvement will not only address privacy issues specific to that business process but also help ensure consistency in the way the company uses PII across different AI applications.[vi]

5. Don’t rely on, or accept as a fait accompli, the “black box” and the lack of AI transparency. Although AI and related tools are inherently complicated, the black-box defense should not be relied upon to combat future claims of discrimination (after all, ignorance is not a defense).[vii] Rather, seek to understand what the AI tool is doing (and if you can’t, find someone who can), and document that the tool’s use was structured to produce a fair result. It also makes sense to anticipate potential points of attack on the system upfront so you can help preempt future claims. Demand AI “explainability,” which means, among other things, documenting what decisions were made and why.

Similarly, because many companies use AI technology provided by a third party, claiming that a third-party consultant is responsible for the company’s AI projects is also unlikely to be a viable defense. It is imperative that your organization understand and test any third-party technology, because you likely will not be able to transfer liability to the vendor if discrimination occurs (negotiate a thoughtful contract, but don’t expect it to insulate you from discrimination claims). The EEOC has provided guidance on employment tests that likely applies to AI technology purchased from a third party: “[w]hile a test vendor’s documentation supporting the validity of a test may be helpful, the employer is still responsible for ensuring that its tests are valid.”[viii] Just as you need to understand the upfront decisions shaping the AI project, you must understand and review what third parties are doing for your organization.

6. The output is only as good as the input. AI tools crawl through data and make connections. Sometimes those connections have a “disparate impact” on a protected class of people, or simply appear unfair (either of which may invite legal challenges). If the tool is disproportionately selecting or screening out members of a protected group, that may signal a discriminatory problem in either the data inputs or the process by which the data is analyzed. Before launching the AI program, it is prudent to think through and discuss (with the lawyers and possibly compliance team members) the scope, type, and sources of the input data, while staying mindful of any discriminatory bias the tool may have. That will likely require more upfront work, but given the potential for discrimination claims and their impact, companies are well served to redouble their efforts to get this right up front. So select carefully, analyze thoroughly, document that vigilance to promote transparency in an opaque process, test the tool, and verify the results.[ix] “If a company wants to make its business fit for AI, it has to fulfill several criteria, It has to be skilled in data and understand, how data is collected, cleaned and prepared, for AI processing . . . .”[x]
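One widely used screening heuristic for this kind of check is the four-fifths rule from the EEOC’s Uniform Guidelines on Employee Selection Procedures: a group’s selection rate that is less than four-fifths (80 percent) of the highest group’s rate is generally regarded as evidence of adverse impact. The minimal Python sketch below computes those ratios for a hypothetical pre-launch test run. The group labels and counts are invented, the rule is a rule of thumb from the employment context rather than a legal safe harbor, and other settings (lending, for example) may call for different statistical methods.

```python
def selection_rates(outcomes):
    """outcomes maps a group label to (number selected, number of applicants)."""
    return {group: selected / total for group, (selected, total) in outcomes.items()}


def impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        # No one was selected in any group, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / highest for group, rate in rates.items()}


def flag_adverse_impact(outcomes, threshold=0.8):
    """Groups whose ratio falls below the four-fifths (0.8) rule of thumb."""
    return sorted(
        group for group, ratio in impact_ratios(outcomes).items() if ratio < threshold
    )


# Hypothetical approval counts from a pre-launch test run (invented numbers).
test_run = {"group_a": (60, 100), "group_b": (30, 100), "group_c": (50, 100)}

print(impact_ratios(test_run))
print(flag_adverse_impact(test_run))
```

Here group_b’s 30 percent rate is half of group_a’s 60 percent, so it is flagged; group_c’s ratio (about 0.83) clears the threshold. A flag is a prompt to examine the data inputs and the process with counsel, not a finding of discrimination.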

7. Determine organizational comfort with AI “predictions.” Shifting decision-making from a person to an algorithm requires a leap of faith. Organizations must understand that a decision is not necessarily based exclusively on facts related to the issue at hand; the algorithm is also analyzing data from other sources to help it conclude, for example, who will be best for the job or which applicants should be granted insurance coverage. In that sense, AI “predicts” outcomes and answers based on an archetype.[xi]

8. Determine how much “rightness” or certainty is needed from AI. Work with the dedicated AI team to determine what level of “rightness” or certainty the company needs before taking action. Error rates (false positives and false negatives) and metrics such as precision (the share of the tool’s positive predictions that turn out to be correct) may be built into the algorithm or its evaluation. In other words, how reliable an AI tool must be before it drives business decisions has to be explored and understood upfront. Lawyers should ask what can be known about the AI technology and document required outcomes early in the project so the company can defend its AI process later if need be.
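The error-rate vocabulary above can be made concrete with a small, hypothetical Python sketch. It assumes an evaluation set where true outcomes are known (in real lending, whether a declined applicant would have repaid is often unobservable), and the counts and the 0.75 and 0.15 thresholds are invented stand-ins for whatever the team actually documents upfront.

```python
from dataclasses import dataclass


@dataclass
class Counts:
    """Outcomes on a hypothetical evaluation set where true results are known.

    "Positive" here means the tool predicted the applicant would repay.
    """
    tp: int  # predicted to repay, did repay
    fp: int  # predicted to repay, defaulted
    tn: int  # predicted to default, defaulted
    fn: int  # predicted to default, would have repaid


def precision(c):
    """Share of positive predictions that were correct."""
    return c.tp / (c.tp + c.fp)


def false_positive_rate(c):
    """Share of actual defaulters the tool wrongly predicted would repay."""
    return c.fp / (c.fp + c.tn)


def false_negative_rate(c):
    """Share of actual repayers the tool wrongly predicted would default."""
    return c.fn / (c.fn + c.tp)


def meets_requirements(c, min_precision=0.75, max_false_negative_rate=0.15):
    """Compare the tool against hypothetical thresholds agreed on and documented upfront."""
    return precision(c) >= min_precision and false_negative_rate(c) <= max_false_negative_rate


evaluation = Counts(tp=80, fp=20, tn=50, fn=10)
print(precision(evaluation), false_positive_rate(evaluation), false_negative_rate(evaluation))
print(meets_requirements(evaluation))
```

Writing the thresholds down as explicit parameters mirrors the recommendation to document required outcomes early: the record shows what standard the tool was held to before anyone saw its results.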

9. Test the AI tool to ensure it is not discriminating. Employers should test their AI tools and algorithms often to get them “to the stage where AI can remove human bias, and . . . to know where humans need to spot-check for unintended robot bias.”[xii] We recommend not testing your AI against an existing system, which may itself be biased, but instead having a data scientist statistically validate it, ideally one operating at the direction of an attorney so that the work may remain privileged.
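What “statistically validate” means will depend on the data scientist and the setting. As one hypothetical illustration, the Python sketch below applies a standard two-sided, two-proportion z-test to ask whether two groups’ approval rates differ by more than chance would explain. The counts and the 0.05 significance level are assumptions, and other methods (Fisher’s exact test, or regression that controls for legitimate risk factors) may be more appropriate. Statistical significance is also not, by itself, a legal conclusion.

```python
import math


def two_proportion_z_test(selected_a, total_a, selected_b, total_b):
    """Two-sided z-test for a difference between two selection rates.

    Returns (z statistic, p-value) using the pooled-proportion standard error.
    """
    rate_a = selected_a / total_a
    rate_b = selected_b / total_b
    pooled = (selected_a + selected_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        # Everyone or no one was selected in both groups: no difference to test.
        return 0.0, 1.0
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical approval counts for two groups from a validation run.
z, p = two_proportion_z_test(60, 100, 30, 100)
ALPHA = 0.05  # hypothetical significance level chosen by the team
print(f"z = {z:.2f}, p = {p:.5f}, significant: {p < ALPHA}")
```

This compares groups within the tool’s own outcomes rather than benchmarking against an existing, possibly biased, system.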

10. People should make the really important decisions. As powerful as AI tools are (and they are only getting better), people should make the final call on important business decisions. Letting an AI tool “run wild” or decide major issues for the company is not prudent. This is why we don’t let drone software assassinate terrorists without a human agreeing that the chosen target meets military requirements.

Conclusion

The legal and business landscape for AI and predictive algorithmic decision-making is evolving in real time. As we move forward into this brave new world of AI, regulators, stakeholders, company leadership, and legal departments will be playing catch-up. Despite the complex math that underlies the technology, lawyers are uniquely positioned to add value to a company’s Big Data or AI team. With a working understanding of how the technology functions, and knowledge of the potential for bias or disparate impact, lawyers should be able to bridge the gap between the technical and legal spaces.

Business leaders and lawyers alike should stay abreast of regulatory developments relating to AI and related technologies. If an AI/Big Data team is in place, specific members should be dedicated to observing, understanding, and summarizing technical and regulatory developments for the rest of the team.

Businesses that position themselves as proactive in the face of new regulations, laws, and best practices will be better prepared to benefit from and defend their AI practices in this complex new world where math rules the day.

[i] Theodore Claypoole, The Law of Artificial Intelligence and Smart Machines (ABA 2019).

[ii] Daniel Cossins, Discriminating algorithms: 5 times AI showed prejudice, New Scientist, Apr. 12, 2018 (“Artificial intelligence is supposed to make life easier for us all—but it is also prone to amplify sexist and racist biases from the real world.”).

[iii] “…AI can result in bias by selecting for certain neutral characteristics that have a discriminatory impact against minorities or women. For example, studies show that people who live closer to the office are likelier to be happy at work. So an AI algorithm might select only resumes with certain ZIP codes that would limit the potential commute time. This algorithm could have a discriminatory impact on those who do not live in any of the nearby ZIP codes, inadvertently excluding residents of neighborhoods populated predominantly by minorities.”

[iv] Daniel Cossins, supra note ii (“Modern life runs on intelligent algorithms. The data-devouring, self-improving computer programs that underlie the artificial intelligence revolution already determine Google search results, Facebook news feeds and online shopping recommendations. Increasingly, they also decide how easily we get a mortgage or a job interview.”).

[v] Anastassia Lauterbach, Introduction to Artificial Intelligence and Machine Learning, in The Law of Artificial Intelligence and Smart Machines (Theodore Claypoole ed., ABA 2019).

[vi] Cameron F. Kerry, Protecting privacy in an AI-driven world (“As artificial intelligence evolves, it magnifies the ability to use personal information in ways that can intrude on privacy interests by raising analysis of personal information to new levels of power and speed.”).

[viii] The U.S. Equal Employment Opportunity Commission, Employment Tests and Selection Procedures (“These [AI] tools often function in black boxes—meaning that they’re proprietary and operated by the companies that sell them—which makes it difficult for us to know when or how they might be harming real people (or if they even work as intended). And new AI-based tools can also raise concerns about privacy and surveillance.”).

[ix] We also recommend doing this now, even if you have an AI system currently in use.

[x] Anastassia Lauterbach, supra note v.

[xii] https://www.shrm.org/ResourcesAndTools/legal-and-compliance/employment-law/Pages/artificial-intelligence-discriminatory-data.aspx